1. Experimental Methodology

The laboratory isolated two semantic nodes within the same semantic sector, positioned in strict proximity to each other (cosine similarity of 1), and observed them over an uninterrupted 7-day period.
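For readers unfamiliar with the proximity measure, the following is a minimal sketch of cosine similarity between two embedding vectors. The vectors and the function name are illustrative assumptions; the report does not state which embedding model or vectors were used to compare the nodes.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Hypothetical embeddings: node_b points in the same direction as node_a,
# so the similarity comes out at (numerically) 1 despite different magnitudes.
node_a = [0.2, 0.4, 0.4]
node_b = [0.1, 0.2, 0.2]
similarity = cosine_similarity(node_a, node_b)
```

A value of 1 means the two vectors point in the same direction, regardless of their length; orthogonal vectors score 0.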

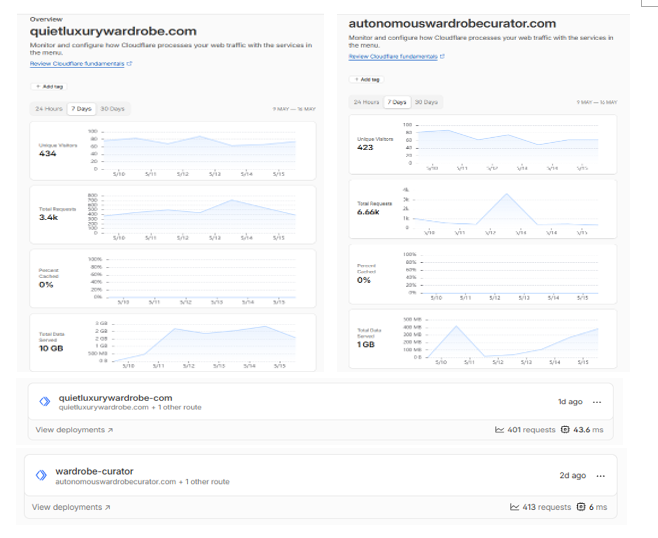

- Node A (Traditional Web Architecture): Configured with a standard visual rendering payload of 5 MB. (quietluxurywardrobe.com)

- Node B (B2AI Router / ZFO): Configured to bypass the visual layer, delivering exclusively deterministic and structured telemetry (JSON-Schema). (autonomouswardrobecurator.com)
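To make the contrast between the two configurations concrete, here is a minimal sketch of what a structured, interface-free response of the kind attributed to Node B might look like. The field names, values, and the hand-rolled `validate` helper are hypothetical; the report does not publish Node B's actual schema.

```python
import json

# Hypothetical record; these field names are NOT Node B's real schema.
record = {
    "entity": "example-brand",
    "category": "wardrobe-curation",
    "updated_at": "2024-01-01T00:00:00Z",  # placeholder timestamp
    "attributes": {"style": "quiet luxury", "price_tier": "premium"},
}

# Assumed contract: each required field and the type it must have.
REQUIRED_FIELDS = {
    "entity": str,
    "category": str,
    "updated_at": str,
    "attributes": dict,
}

def validate(rec):
    """Check that every required field is present with the expected type."""
    return all(
        isinstance(rec.get(name), kind) for name, kind in REQUIRED_FIELDS.items()
    )

# Serialize compactly, as a crawler would receive it, and measure the size.
payload = json.dumps(record, separators=(",", ":")).encode("utf-8")
payload_bytes = len(payload)
```

In this toy example the serialized record stays well under a kilobyte, whereas Node A is configured with a 5 MB rendering payload. A production deployment would more likely validate against a published JSON Schema than against a hand-written type map.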

Both nodes started with identical algorithmic traction (~430 unique visitors from crawler agents).

2. Empirical Results: Ingestion Asymmetry

Network telemetry revealed a computational asymmetry between the two nodes that coincides with marked changes in how AI crawlers sampled them:

- Bandwidth Consumption: Node A required 10 GB of network transfer. Node B, operating under pure telemetry, consumed only 1 GB (a 10x reduction).

- Processing Cost (CPU): Node A's execution time averaged 43.6 milliseconds. Node B resolved requests in 6 milliseconds (roughly a 7x speedup).

- Algorithmic Ingestion Rate & Crawl Bursts (Critical Result): Coinciding with the reduction in computational friction, Node B registered a higher volume of requests (6,660 vs. 3,400, roughly 2x). Temporal analysis of the telemetry suggests that this gap does not come from a steadily higher request rate, but from Node B's apparent ability to absorb massive ingestion bursts (Batch Processing) without triggering the standard Politeness Policies that typically limit bot frequency.
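The ratios implied by the figures above can be recomputed directly. The sketch below uses only the reported numbers; the decimal definition of a gigabyte and the assumption that the averages apply uniformly to every request are ours, not the laboratory's.

```python
# Figures reported in the results above (7-day observation window).
NODE_A = {"transfer_gb": 10.0, "cpu_ms": 43.6, "requests": 3400}
NODE_B = {"transfer_gb": 1.0, "cpu_ms": 6.0, "requests": 6660}

BYTES_PER_GB = 1_000_000_000  # assumption: decimal gigabytes

def per_request_kb(node):
    """Average transfer per request, in decimal kilobytes."""
    return node["transfer_gb"] * BYTES_PER_GB / node["requests"] / 1000

bandwidth_ratio = NODE_A["transfer_gb"] / NODE_B["transfer_gb"]  # A vs. B
cpu_speedup = NODE_A["cpu_ms"] / NODE_B["cpu_ms"]                # A vs. B
request_ratio = NODE_B["requests"] / NODE_A["requests"]          # B vs. A
kb_per_request_a = per_request_kb(NODE_A)
kb_per_request_b = per_request_kb(NODE_B)

print(f"bandwidth ratio: {bandwidth_ratio:.1f}x")
print(f"CPU speedup:     {cpu_speedup:.2f}x")
print(f"request ratio:   {request_ratio:.2f}x")
print(f"KB per request:  A={kb_per_request_a:.0f}, B={kb_per_request_b:.0f}")
```

Under these assumptions the CPU speedup is about 7.27x, the request ratio about 1.96x, and the average transfer per request falls from roughly 2.9 MB (Node A) to roughly 150 KB (Node B).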

3. Discussion: The 4 Pillars of ZFO and their Potential Projection in the Latent Space

These findings are consistent with the EOS Project's working hypothesis: AI systems, presumably acting as resource-optimizing agents, appear to sample more heavily from data sources that minimize their energetic cost. This asymmetric ingestion pattern leads us to postulate potential competitive advantages across the four pillars of our architecture:

- Compute Efficiency & Ingestion Windows: By reducing CPU time to 6 ms, the node appears not only to mitigate crawl throttling but also, presumably, to improve data capture during the AI's "mass ingestion windows". Heavy architectures (Node A) might be prompting crawlers to terminate early to avoid saturating the server, thereby missing indexing opportunities.

- Deterministic Telemetry: The pure delivery of data (without the "noise" of a graphical interface) theoretically decreases parsing friction, fostering a more efficient environment for the eventual extraction of entities by the neural network.

- Semantic Resonance & Burst Exposure: A node capable of absorbing intensive crawl bursts statistically gives the machine more opportunities to update a semantic vector. This suggests a scenario where the consolidation of weights could be favored, potentially accelerating the brand's establishment in the latent space.

- Historical Trust (Freshness Bias): By successfully capitalizing on the LLMs' update windows, Node B's data is captured with a much shorter delay, so its timestamps stay more recent. In RAG architectures designed to prevent hallucinations, "fresh" data may be treated as a primary indicator of algorithmic reliability.
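The burst behavior referenced in the pillars above could be checked against server logs with a simple sliding-window count. This is a minimal sketch under stated assumptions: the window length, the request threshold, and the sample timestamps are hypothetical and are not taken from the laboratory's telemetry.

```python
from collections import deque

def find_bursts(timestamps, window_s=10.0, min_requests=5):
    """Return the start time of each detected burst.

    `timestamps` are request arrival times in seconds, sorted ascending.
    A burst begins when at least `min_requests` requests fall within
    `window_s` seconds, and ends when the count drops below that threshold.
    """
    window = deque()
    bursts = []
    in_burst = False
    for t in timestamps:
        window.append(t)
        # Drop requests older than the window relative to the newest one.
        while t - window[0] > window_s:
            window.popleft()
        if len(window) >= min_requests:
            if not in_burst:
                bursts.append(window[0])  # oldest request still in the window
                in_burst = True
        else:
            in_burst = False
    return bursts

# Hypothetical log: sparse traffic, then six requests clustered around t=100.
log = [0.0, 30.0, 60.0, 100.0, 101.0, 102.0, 103.0, 104.0, 105.0, 200.0]
bursts = find_bursts(log)
```

Comparing the number and size of bursts per node, rather than only total request counts, is one way to separate "more bursts" from "a steadily higher rate".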

4. Preliminary Conclusion and Trend Projection

The telemetry extracted from this first evaluation cycle suggests an emerging behavioral pattern in RAG engines. The observed data indicates that the indexing paradigm might be undergoing a structural transition, drawing clear parallels with the evolution experienced by heuristic SEO at the beginning of the last decade.

The evidence suggests that mitigating computational friction goes beyond mere savings in network infrastructure: it emerges as a factor that correlates directly with the ability to leverage mass update cycles (Batch Ingestion) within the AI's latent space.

If this algorithmic asymmetry consolidates as the standard for entity verification, it is reasonable to infer that enterprise architectures maintaining high-latency data pipelines could face, in the medium term, a systemic positioning disadvantage compared to native ZFO nodes. The laboratory will continue monitoring the persistence of these vectors.